
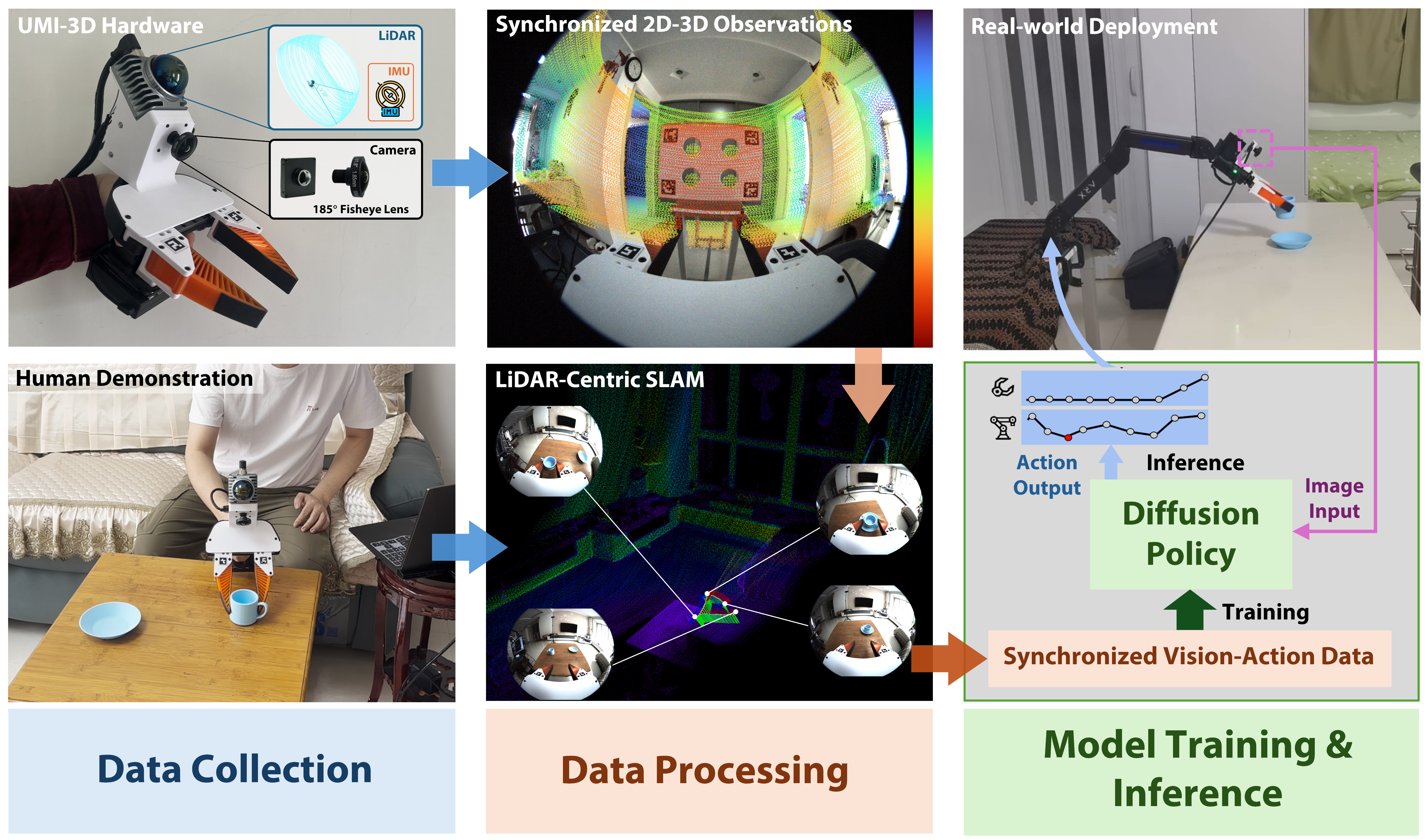

UMI-3D

Extending UMI from Vision-Limited to 3D Spatial Perception

When Vision Fails, LiDAR Takes Over.

Cost < 5000 RMB (about $700).

Robust SLAM.

No External Infrastructure.

Unfazed by Occlusion, Dynamics, and Tracking Loss.

Fully Open-Source.

Building Embodied Intelligence at Home.

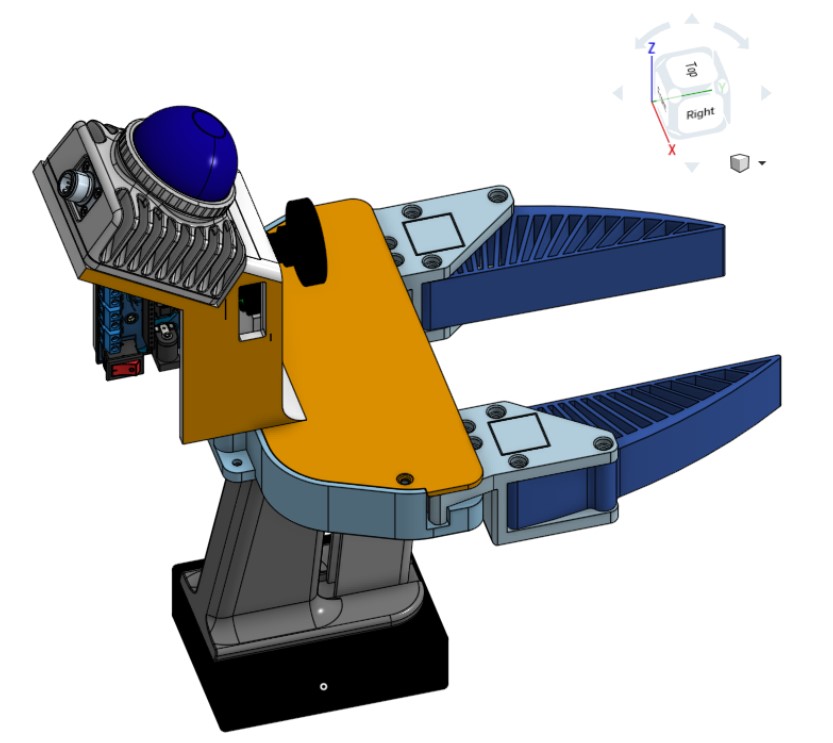

Hardware Design

Real Hardware

CAD Overview

SLAM & Data Processing

White Wall (weak texture)

Curtain (weak texture, dynamic objects, light changes)

Sliding Door (dynamic objects, light changes, precise operation)

Policy Training & Deployment

Task 1 — Cup → Saucer Placement ☕️

Training Data

All Experiments and Scores

Generalization Results

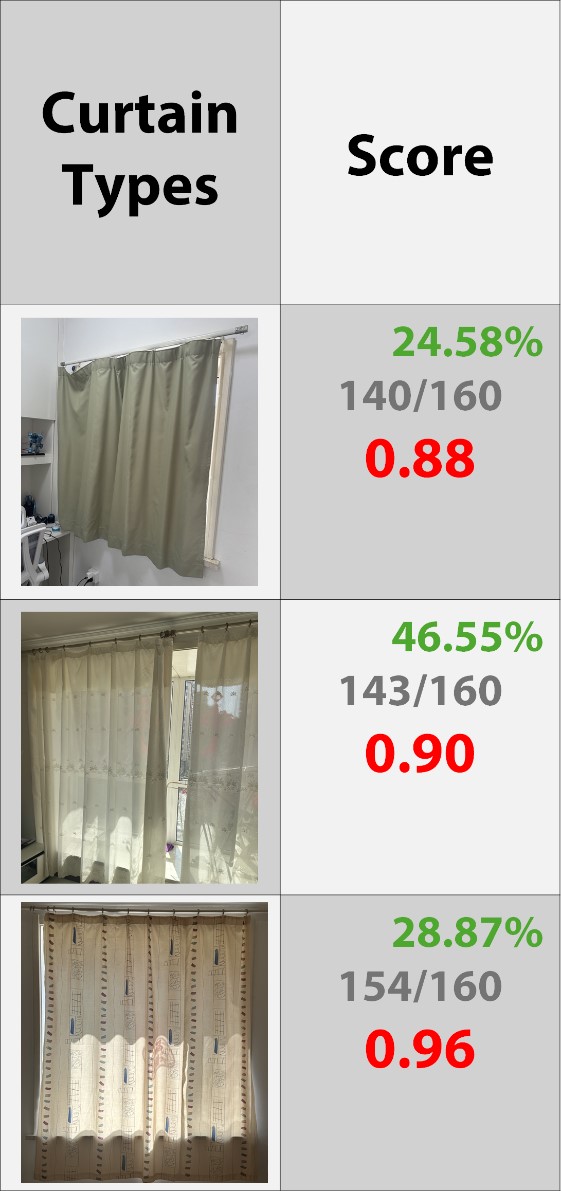

Task 2 — Curtain Pulling 🎪

All Experiments and Scores

Generalization Results

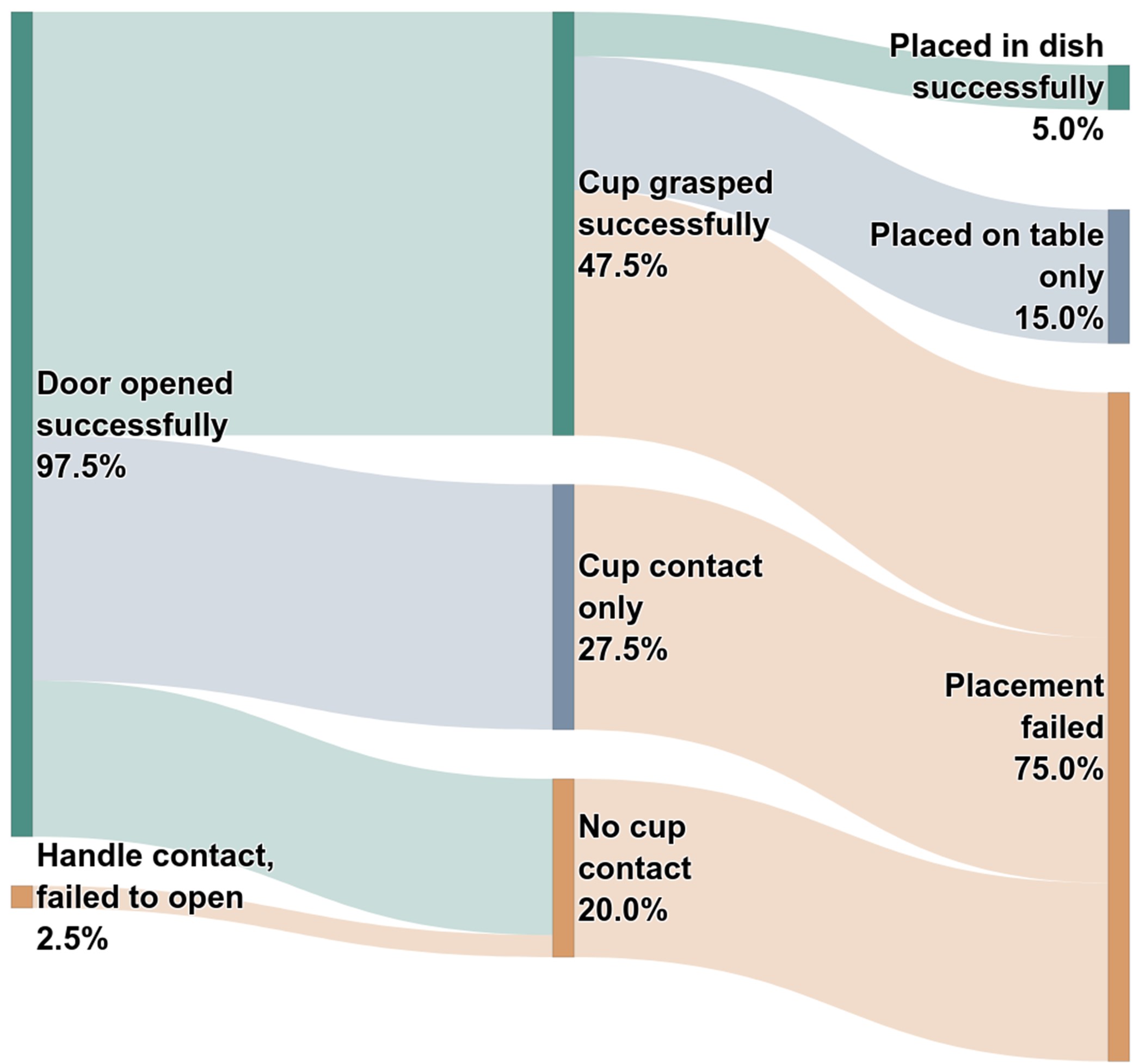

Task 3 — Open Sliding Door → Grasp Cup → Place on Table 🚪☕️⛩

All Experiments and Scores

Failure Propagation

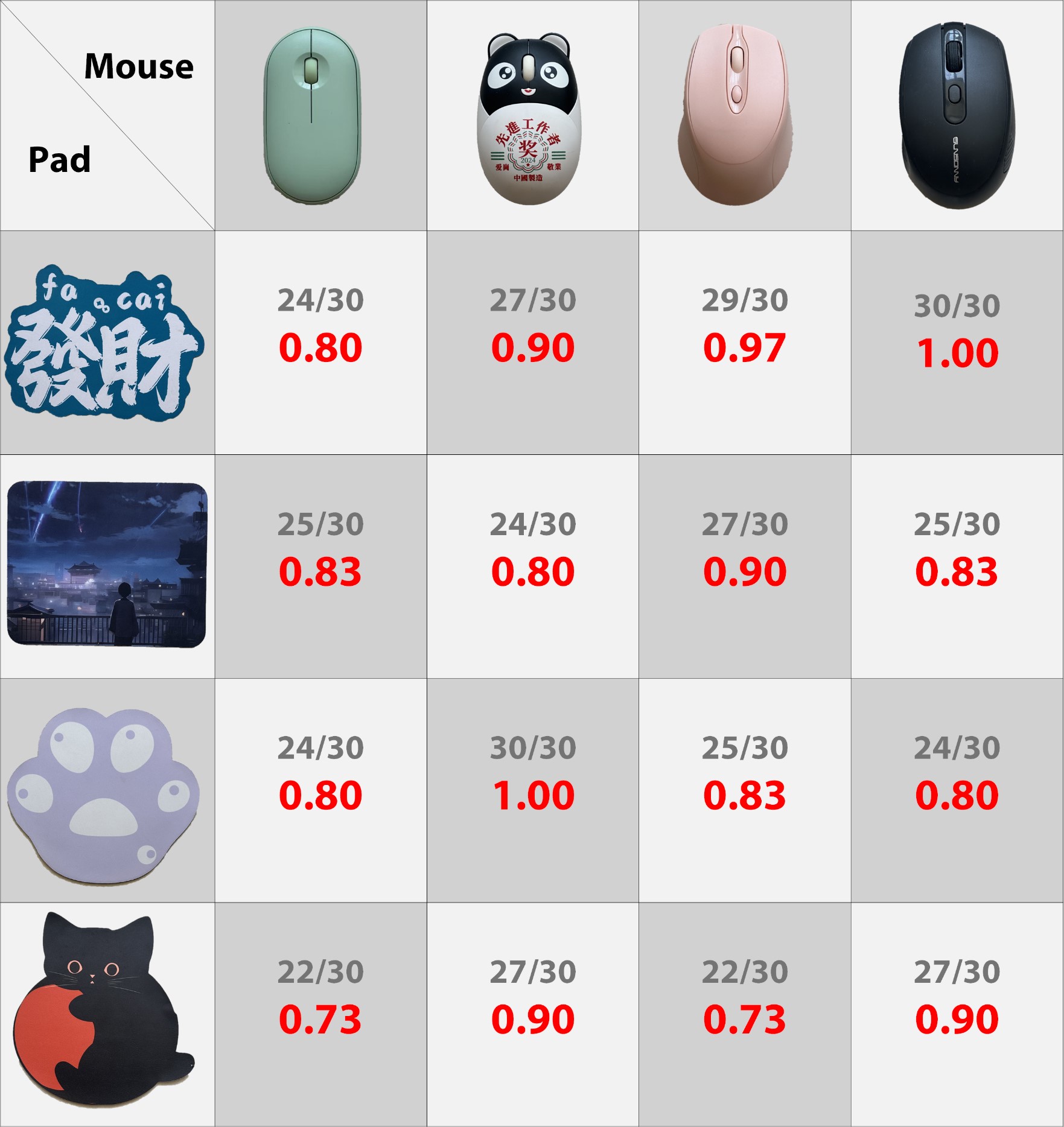

Task 4 — Mouse → Pad 🖱️ (Verify the compatibility between UMI-3D and the original UMI)

All Experiments and Scores

Generalization Results

Dataset & Models

Team

1. The University of Hong Kong

2. University of Science and Technology of China

3. Physical Intelligence Laboratory

Paper

Latest version: available on arXiv (https://arxiv.org/abs/2604.14089).

BibTeX

@misc{wang2026umi3dextendinguniversalmanipulation,
  title={UMI-3D: Extending Universal Manipulation Interface from Vision-Limited to 3D Spatial Perception},
  author={Ziming Wang},
  year={2026},
  eprint={2604.14089},
  archivePrefix={arXiv},
  primaryClass={cs.RO},
  url={https://arxiv.org/abs/2604.14089},
}

Acknowledgements

The author would like to thank Prof. Fu Zhang and Dr. Zhengrong Xue for their insightful and constructive discussions. The author also gratefully acknowledges Prof. Yanyong Zhang for providing essential computational resources that enabled this work.

Contact

If you have any questions, please feel free to contact Ziming Wang.

Website template borrowed from UMI.